
- Description:

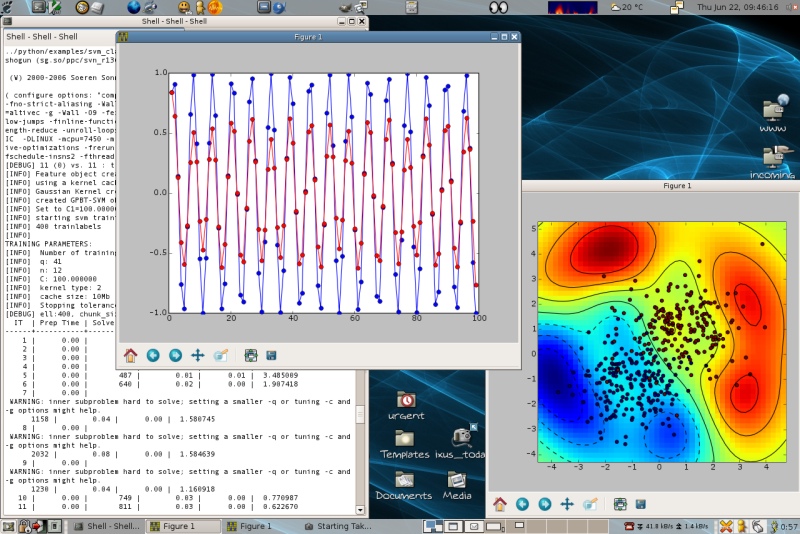

The SHOGUN machine learning toolbox's focus is on large scale kernel methods and especially on Support Vector Machines (SVM). It comes with a generic interface for SVMs, features several SVM and kernel implementations, includes LinAdd optimizations and also Multiple Kernel Learning algorithms. SHOGUN also implements a number of linear methods. It allows the input feature-objects to be dense, sparse or strings and of type int/short/double/char.

The toolbox not only provides efficient implementations of the most common kernels, like the

- Linear,

- Polynomial,

- Gaussian and

- Sigmoid Kernel
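To make the four standard kernels concrete, here is a minimal pure-Python sketch of each kernel function. It is for illustration only and does not use SHOGUN's API. The function and parameter names (`width`, `degree`, `c`, `gamma`, `coef0`) are assumptions. The Gaussian is written as exp(-||x - y||^2 / width), which is one common width convention; other libraries use 2σ² or a γ factor instead.

```python
import math


def linear_kernel(x, y):
    """k(x, y) = <x, y>"""
    return sum(a * b for a, b in zip(x, y))


def polynomial_kernel(x, y, degree=2, c=1.0):
    """Inhomogeneous polynomial kernel: k(x, y) = (<x, y> + c)^degree"""
    return (linear_kernel(x, y) + c) ** degree


def gaussian_kernel(x, y, width=1.0):
    """Gaussian (RBF) kernel: k(x, y) = exp(-||x - y||^2 / width)"""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / width)


def sigmoid_kernel(x, y, gamma=1.0, coef0=0.0):
    """Sigmoid kernel: k(x, y) = tanh(gamma * <x, y> + coef0)"""
    return math.tanh(gamma * linear_kernel(x, y) + coef0)
```

For example, `linear_kernel([1.0, 2.0], [3.0, 4.0])` is 11 and the degree-2 polynomial kernel with `c=1.0` gives (11 + 1)^2 = 144 on the same pair.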

but also comes with a number of recent string kernels, such as the

- Locality Improved,

- Fisher,

- TOP,

- Spectrum,

- Weighted Degree Kernel (with shifts).
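Two of these string kernels can be sketched in a few lines of pure Python, independent of SHOGUN's own implementations. The spectrum kernel compares the k-mer count profiles of two strings. The weighted degree (WD) kernel counts position-wise matching substrings of length 1 to D, weighted here by β_d = 2(D − d + 1) / (D(D + 1)); this weighting is an assumption taken from the WD kernel literature. The "with shifts" variant and the LinAdd speedups are not shown. All function names are hypothetical.

```python
from collections import Counter


def spectrum_kernel(s, t, k=3):
    """Inner product of the k-mer count vectors of s and t."""
    cs = Counter(s[i:i + k] for i in range(len(s) - k + 1))
    ct = Counter(t[i:i + k] for i in range(len(t) - k + 1))
    # Counter returns 0 for k-mers absent from t, so only shared k-mers contribute
    return sum(count * ct[kmer] for kmer, count in cs.items())


def weighted_degree_kernel(s, t, degree=3):
    """WD kernel without shifts: weighted count of aligned matching substrings."""
    if len(s) != len(t):
        raise ValueError("the WD kernel compares sequences of equal length")
    total = 0.0
    for d in range(1, degree + 1):
        # assumed weighting: longer matches get smaller per-match weights
        beta = 2.0 * (degree - d + 1) / (degree * (degree + 1))
        matches = sum(1 for l in range(len(s) - d + 1) if s[l:l + d] == t[l:l + d])
        total += beta * matches
    return total
```

Unlike the spectrum kernel, the WD kernel is position-aware: "AAAA" and "TTTT" score 0, and a k-mer only counts when it occurs at the same position in both strings.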

For the latter, the efficient LinAdd optimizations are implemented. SHOGUN also offers the freedom of working with custom pre-computed kernels. One of its key features is the combined kernel, which is constructed as a weighted linear combination of a number of sub-kernels, each of which need not work on the same domain. An optimal sub-kernel weighting can be learned using Multiple Kernel Learning. Currently, SVMs can be used for two-class classification and regression problems. In addition, SHOGUN implements a number of linear methods, such as
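The combined kernel idea can be illustrated with a small pure-Python sketch. This is not SHOGUN's CombinedKernel API. Each example is assumed to be a tuple whose i-th component belongs to the domain of the i-th sub-kernel, here a real vector and a DNA string. The weights are fixed by hand; Multiple Kernel Learning would learn them instead, which is not shown. All names are hypothetical.

```python
import math
from collections import Counter


def gaussian(x, y, width=1.0):
    """Sub-kernel on real vectors."""
    return math.exp(-sum((a - b) ** 2 for a, b in zip(x, y)) / width)


def spectrum(s, t, k=2):
    """Sub-kernel on strings (k-mer spectrum)."""
    cs = Counter(s[i:i + k] for i in range(len(s) - k + 1))
    ct = Counter(t[i:i + k] for i in range(len(t) - k + 1))
    return sum(c * ct[u] for u, c in cs.items())


def combined_kernel(a, b, sub_kernels, weights):
    """k(a, b) = sum_i w_i * k_i(a_i, b_i); sub-kernel i only sees component i."""
    return sum(w * k(ai, bi) for k, w, ai, bi in zip(sub_kernels, weights, a, b))


def gram_matrix(examples, sub_kernels, weights):
    """Full kernel matrix of the combined kernel over a list of examples."""
    return [[combined_kernel(a, b, sub_kernels, weights) for b in examples]
            for a in examples]
```

With non-negative weights, the combination of valid kernels is again a valid kernel, which is why any SVM can be trained on it unchanged.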

- Linear Discriminant Analysis (LDA),

- Linear Programming Machine (LPM) and

- (Kernel) Perceptrons.

It also features algorithms to train hidden Markov models.
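The kernel perceptron among these linear methods can be sketched in its dual form in pure Python. This is an illustration under stated assumptions, not SHOGUN's implementation. Labels are assumed to be ±1, there is no explicit bias term (a kernel with a constant feature, such as (x·y + 1)^2, absorbs it), and the function names are hypothetical.

```python
def train_kernel_perceptron(X, y, kernel, epochs=10):
    """Dual perceptron: alpha[i] counts the mistakes made on example i."""
    n = len(X)
    alpha = [0] * n
    for _ in range(epochs):
        errors = 0
        for i in range(n):
            # decision value as a kernel expansion over the training examples
            f = sum(alpha[j] * y[j] * kernel(X[j], X[i]) for j in range(n))
            if y[i] * f <= 0:  # misclassified (or on the boundary): update
                alpha[i] += 1
                errors += 1
        if errors == 0:  # converged on the training set
            break
    return alpha


def predict(x, X, y, alpha, kernel):
    """Sign of the kernel expansion at x."""
    f = sum(a * yj * kernel(xj, x) for a, yj, xj in zip(alpha, y, X))
    return 1 if f > 0 else -1
```

On the XOR problem, which no linear perceptron can solve, the polynomial kernel `lambda a, b: (a[0]*b[0] + a[1]*b[1] + 1.0) ** 2` lets this sketch separate all four points within three epochs.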

The input feature-objects can be

- dense

- sparse or

- strings and of type int/short/double/char

and can be converted into different feature types. Chains of preprocessors (e.g. subtracting the mean) can be attached to each feature object, allowing for on-the-fly preprocessing.
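A preprocessor chain that is applied on the fly can be sketched as follows. The class `PreprocessedFeatures`, its methods and `make_subtract_mean` are hypothetical names for illustration and are not SHOGUN's API. The stored vectors are never modified. Each preprocessor in the chain runs in order whenever a vector is requested.

```python
class PreprocessedFeatures:
    """Hypothetical feature container that applies a preprocessor chain lazily."""

    def __init__(self, vectors):
        self._vectors = vectors
        self._chain = []

    def add_preprocessor(self, fn):
        """Append a function mapping a vector to a new vector."""
        self._chain.append(fn)

    def get_vector(self, i):
        # copy, then run every preprocessor in the order it was attached
        v = list(self._vectors[i])
        for fn in self._chain:
            v = fn(v)
        return v


def make_subtract_mean(vectors):
    """Build a preprocessor that subtracts the per-dimension mean of `vectors`."""
    dim = len(vectors[0])
    mean = [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]

    def subtract_mean(v):
        return [a - m for a, m in zip(v, mean)]

    return subtract_mean
```

Because preprocessing happens at access time, no second, preprocessed copy of the data has to be kept in memory; the trade-off is that the chain is re-run on every access.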

SHOGUN is implemented in C++ and interfaces to Matlab(tm), R, Octave and Python.

- Changes to previous version:

This release contains several cleanups and enhancements:

Features:

- Support for all data types in python_modular: dense matrices, scipy.sparse CSC matrices and strings of type bool, char, (u)int{8,16,32,64}, float{32,64,96}. In addition, individual vectors/strings can now be obtained and even changed. See examples/python_modular/features_*.py for examples.

- AUC maximization now works with arbitrary kernel SVMs.

- Documentation updates, many examples have been polished.

- Slight speedup of the Oligo kernel.

Bugfixes:

- Fix reading strings from directory (f.load_from_directory()).

- Update copyright to 2009.

Cleanup and API Changes:

- Remove {Char,Short,Word,Int,Real}Features and only ever use the templated SimpleFeatures.

- Split up examples in examples/python_modular into separate files.

- Now use s.set_features(strs) instead of s.set_string_features(strs) to set string features.

- The meaning of the width parameter of the Oligo kernel has changed, and OligoKernel has been renamed to OligoStringKernel.


- Supported Operating Systems: Cygwin, Linux, Mac OS X

- Data Formats: Plain ASCII, SVMlight

- Tags: Bioinformatics, Large Scale, String Kernel, Kernel, Kernelmachine, Lda, Lpm, Matlab, Mkl, Octave, Python, R, Svm


Comments


- Soeren Sonnenburg (on September 12, 2008, 16:14:36)

- In case you find bugs, feel free to report them at [http://trac.tuebingen.mpg.de/shogun](http://trac.tuebingen.mpg.de/shogun).


- Tom Fawcett (on January 3, 2011, 03:20:48)

- You say, "Some of them come with no less than 10 million training examples, others with 7 billion test examples." I'm not sure what this means. I have problems with mixed symbolic/numeric attributes and the training example sets don't fit in memory. Does SHOGUN require that training examples fit in memory?


- Soeren Sonnenburg (on January 14, 2011, 18:12:01)

- Shogun does not necessarily require examples to be in memory (if you use any of the FileFeatures). However, most algorithms within shogun are batch-type, so using the non-in-memory FileFeatures would probably be very slow. This does not matter for making predictions, of course, even though the 7 billion test examples mentioned above referred to predicting gene starts on the whole human genome (held in memory, about 3.5 GB), where a context window of 1200 nt was shifted along that string. In addition, one can compute features (or the feature space) on the fly, potentially saving a lot of memory. I'm not sure how big your problem is, but I guess this is better discussed on the shogun mailing list.
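The context-window scan described in this reply can be sketched as a Python generator that extracts one window at a time. This is not SHOGUN code. Apart from the string itself, only the current window is materialized, and each window can be scored and discarded. The function name, the centering convention and the `step` parameter are assumptions. The default window length of 1200 matches the value quoted in the comment.

```python
def sliding_windows(sequence, window=1200, step=1):
    """Yield (center, window_string) for every position where a full window fits.

    The window spans sequence[center - window // 2 : center - window // 2 + window].
    Windows are produced lazily, so only one is held in memory at a time.
    """
    half = window // 2
    last_center = len(sequence) - (window - half)
    for center in range(half, last_center + 1, step):
        start = center - half
        yield center, sequence[start:start + window]
```

A hypothetical scoring function could then be applied as `(score(w) for _, w in sliding_windows(genome))`, keeping memory use flat regardless of how many positions are scanned.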


- Yuri Hoffmann (on September 14, 2013, 17:12:16)

- I cannot use the Java interface in Cygwin (already reported on GitHub) or in Debian.


Project details for SHOGUN